Sequence-to-Sequence (Seq2Seq) models are at the heart of many natural language processing (NLP) tasks, such as machine translation, text summarization, and dialogue generation. These models transform one sequence into another, which makes them useful across a wide range of AI-driven applications. This article walks through building and training a basic Seq2Seq-style model using C# and ML.NET.

Understanding Sequence-to-Sequence Models

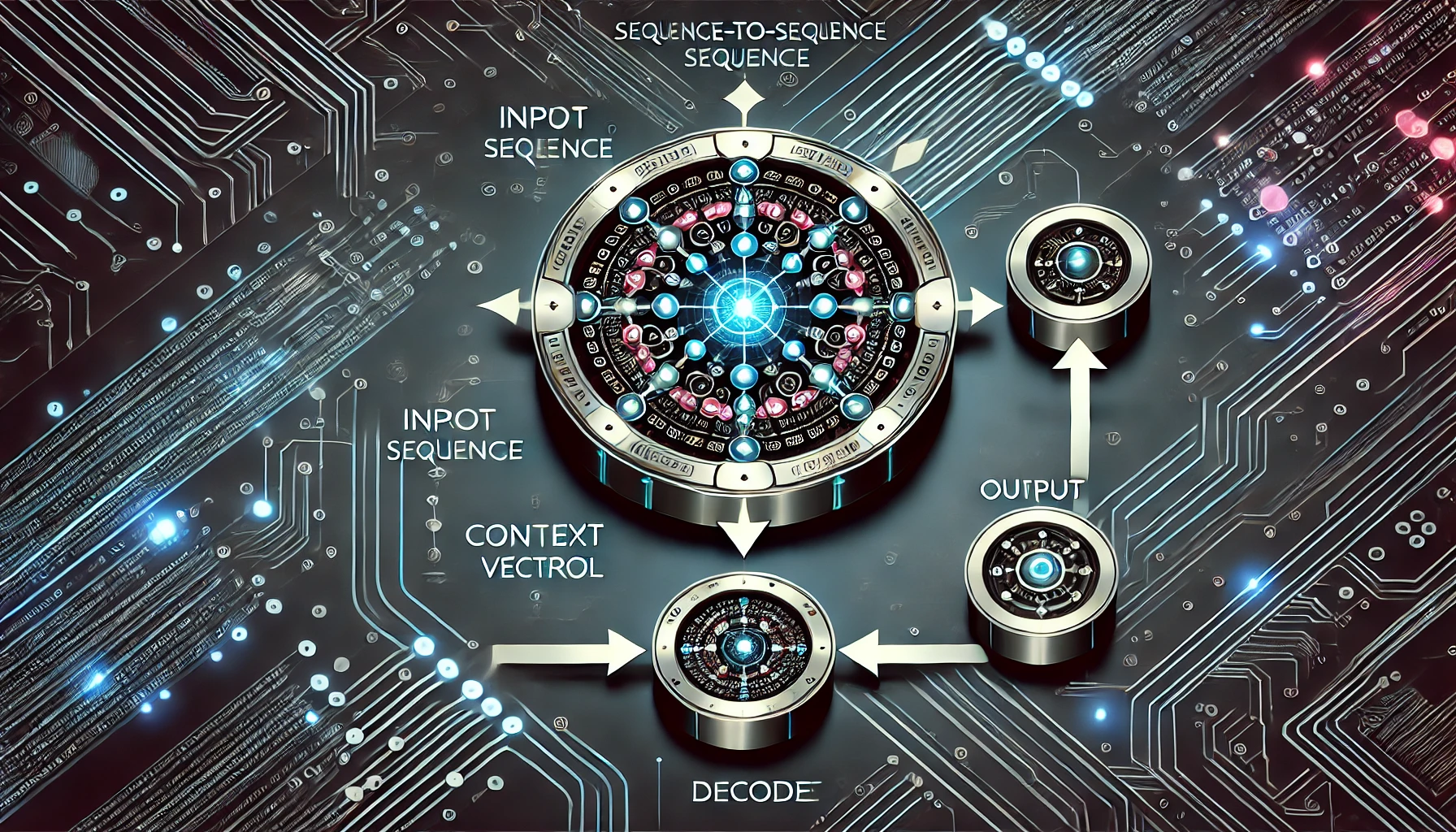

A Seq2Seq model is typically composed of two main parts:

- Encoder: The encoder processes the input sequence (e.g., a sentence) and compresses the information into a context vector (a fixed-size representation). This vector encapsulates the semantic information of the input sequence.

- Decoder: The decoder takes the context vector generated by the encoder and uses it to generate the output sequence (e.g., a translated sentence). The decoder predicts one word at a time, often using the previously predicted word as part of the input for the next prediction.

While modern Seq2Seq models often incorporate attention mechanisms to improve performance, this article will focus on the fundamental structure of Seq2Seq models and how to implement a basic version using C#.
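To make the decoder's word-by-word behavior concrete, here is a minimal sketch of a greedy decoding loop in plain C#. It is not ML.NET code: the `GreedyDecoding` class, the `step` delegate (standing in for a trained decoder), and the `<s>` / `</s>` marker tokens are all assumptions introduced for illustration.

```csharp
using System;
using System.Collections.Generic;

public static class GreedyDecoding
{
    // Hypothetical start and end markers; a real model defines its own.
    public const string StartToken = "<s>";
    public const string EndToken = "</s>";

    // Generates tokens one at a time. `step` stands in for a trained decoder:
    // given the context vector and the previous token, it returns the next token.
    public static List<string> Decode(
        float[] context,
        Func<float[], string, string> step,
        int maxLength = 20)
    {
        var output = new List<string>();
        var previous = StartToken;
        for (var i = 0; i < maxLength; i++)
        {
            var next = step(context, previous);
            if (next == EndToken) break;   // the decoder signalled the end of the sequence
            output.Add(next);
            previous = next;               // feed the prediction back in as the next input
        }
        return output;
    }
}
```

The `maxLength` cap guards against a decoder that never emits the end token.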

Prerequisites

Before we begin, ensure you have the following tools installed:

- .NET SDK: Download and install it from Microsoft’s official site.

- ML.NET: Install it via the NuGet Package Manager in Visual Studio or by running the following command in your terminal:

dotnet add package Microsoft.ML

The text transforms used in this article ship with the core Microsoft.ML package, so no separate transforms package is needed.

Step 1: Preparing the Data

Understanding the Data

To train a Seq2Seq model, you need a dataset consisting of pairs of input and output sequences. For example, if you’re working on a machine translation task, the dataset should contain sentences in one language (input sequence) and their corresponding translations (output sequence).

Here’s an example of how you might structure your data:

var trainingData = new List<Seq2SeqInput>

{

new Seq2SeqInput { InputSequence = new[] { "I", "love", "programming" }, OutputSequence = new[] { "J'aime", "la", "programmation" } },

new Seq2SeqInput { InputSequence = new[] { "How", "are", "you" }, OutputSequence = new[] { "Comment", "ça", "va" } },

// Additional training data

};
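If your corpus is stored as raw sentence pairs rather than pre-tokenized arrays, the token arrays shown above could be produced with a simple whitespace tokenizer. The following is a minimal sketch; the `PairTokenizer` class and its method names are hypothetical, and real translation data usually needs more careful handling of punctuation and casing.

```csharp
using System;

public static class PairTokenizer
{
    // Splits a sentence on any whitespace and drops empty entries.
    // Punctuation stays attached to the neighbouring word.
    public static string[] Tokenize(string sentence) =>
        sentence.Split((char[])null, StringSplitOptions.RemoveEmptyEntries);

    // Tokenizes a source sentence and its translation into a pair of token arrays.
    public static (string[] Input, string[] Output) FromRaw(string source, string target) =>
        (Tokenize(source), Tokenize(target));
}
```

The two arrays returned by `FromRaw` can then be assigned to `InputSequence` and `OutputSequence`.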

Defining the Data Structure

We need to define C# classes to represent the input and output sequences that the model will process.

public class Seq2SeqInput

{

public string[] InputSequence { get; set; }

public string[] OutputSequence { get; set; }

}

public class Seq2SeqOutput

{

public string[] PredictedSequence { get; set; }

}

In this structure:

- InputSequence: Represents the sequence of words in the source language.

- OutputSequence: Represents the sequence of words in the target language.

- PredictedSequence: Holds the sequence predicted by the model during inference.

Step 2: Setting Up the ML.NET Pipeline

Tokenization and Feature Extraction

For a Seq2Seq model, it’s essential to convert words into numerical representations that the model can process. This is typically done through tokenization followed by feature extraction.

var context = new MLContext();

// Load data into IDataView

var dataView = context.Data.LoadFromEnumerable(trainingData);

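// Define the pipeline

// 1. Map target words to numeric keys. Note: SdcaMaximumEntropy expects a single key-valued label per row,

//    so a string[] target has to be reduced to one value per row (for example, the next word to predict).

var pipeline = context.Transforms.Conversion.MapValueToKey("Label", nameof(Seq2SeqInput.OutputSequence))

// 2. Tokenize the input and turn the tokens into n-gram count features

.Append(context.Transforms.Text.TokenizeIntoWords("InputTokens", nameof(Seq2SeqInput.InputSequence)))

.Append(context.Transforms.Text.ProduceWordBags("Features", "InputTokens"))

// 3. Train the classifier; it writes its prediction to the "PredictedLabel" column

.Append(context.MulticlassClassification.Trainers.SdcaMaximumEntropy("Label", "Features"))

// 4. Map the predicted key (not the ground-truth label) back to a word

.Append(context.Transforms.Conversion.MapKeyToValue("PredictedSequence", "PredictedLabel"));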

Explanation of the Pipeline

- MapValueToKey: Converts the output sequence words into keys (numerical values) that the model can process.

- TokenizeIntoWords: Breaks down input sentences into individual words (tokens).

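- ProduceWordBags: Generates a fixed-length feature vector of n-gram counts from the input tokens. Beyond the n-gram window, word order is not preserved.

- SdcaMaximumEntropy: A multiclass classification trainer in ML.NET, used here to predict the next word in the sequence. It emits a single class per input row rather than a whole sequence, so it stands in for one decoder step.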

- MapKeyToValue: Converts the predicted keys back into their corresponding words.
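To illustrate what the key mapping and word-bag steps do conceptually, the sketch below builds a vocabulary and a count vector in plain C#. It is a simplified stand-in, not ML.NET's implementation (which also handles n-grams and its own key encoding); the `BagOfWords` class and its method names are assumptions made for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class BagOfWords
{
    // Assigns each distinct token an index in order of first appearance,
    // similar in spirit to mapping values to keys.
    public static Dictionary<string, int> BuildVocabulary(IEnumerable<string[]> sequences)
    {
        var vocabulary = new Dictionary<string, int>();
        foreach (var token in sequences.SelectMany(s => s))
        {
            if (!vocabulary.ContainsKey(token))
                vocabulary.Add(token, vocabulary.Count);
        }
        return vocabulary;
    }

    // Counts how often each vocabulary word occurs in the tokens.
    // Tokens that are not in the vocabulary are ignored.
    public static float[] Vectorize(string[] tokens, Dictionary<string, int> vocabulary)
    {
        var vector = new float[vocabulary.Count];
        foreach (var token in tokens)
        {
            if (vocabulary.TryGetValue(token, out var index))
                vector[index] += 1f;
        }
        return vector;
    }
}
```

Because the vector only stores counts, "you are how" and "how are you" produce the same features, which is the main limitation of a bag-of-words representation for sequence tasks.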

Step 3: Training the Seq2Seq Model

With the pipeline set up, the next step is to train the model. This is where the model learns to map input sequences to output sequences.

var model = pipeline.Fit(dataView);
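A multiclass trainer learns one label per row, so sequence pairs need to be turned into single-label rows before fitting. One possible way to do this, sketched here as an assumption rather than as the method used in this article, is to expand each pair into next-word examples: the features are the source sentence plus the target words produced so far, and the label is the next target word. The `NextWordExamples` class and the `</s>` end marker are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class NextWordExamples
{
    // Hypothetical marker that tells the decoder loop to stop.
    public const string EndToken = "</s>";

    // Expands one (source, target) pair into one row per target position:
    // Source   = the full source sentence,
    // Prefix   = the target words produced so far,
    // NextWord = the word to predict (EndToken after the last target word).
    public static List<(string Source, string Prefix, string NextWord)> Expand(
        string[] source, string[] target)
    {
        var rows = new List<(string Source, string Prefix, string NextWord)>();
        var sourceText = string.Join(" ", source);
        for (var i = 0; i <= target.Length; i++)
        {
            var prefix = string.Join(" ", target.Take(i));
            var next = i < target.Length ? target[i] : EndToken;
            rows.Add((sourceText, prefix, next));
        }
        return rows;
    }
}
```

A three-word target therefore yields four training rows, the last of which teaches the model when to stop.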

Saving the Trained Model

After training, it is good practice to save the model so that it can be loaded later (for example with context.Model.Load) and reused without retraining.

context.Model.Save(model, dataView.Schema, "seq2seq_model.zip");

Step 4: Making Predictions

Now that the model is trained, you can use it to generate predictions. For example, let’s translate the sentence “I love programming” into French:

var predictionEngine = context.Model.CreatePredictionEngine<Seq2SeqInput, Seq2SeqOutput>(model);

var input = new Seq2SeqInput { InputSequence = new[] { "I", "love", "programming" } };

var prediction = predictionEngine.Predict(input);

Console.WriteLine($"Predicted Translation: {string.Join(" ", prediction.PredictedSequence)}");

Explanation

- CreatePredictionEngine: Creates an engine that makes predictions based on the input data.

- Predict: Uses the trained model to predict the output sequence for the given input.

The output might be something like:

Predicted Translation: J'aime la programmation

Step 5: Evaluating the Model

Model evaluation is critical to understanding how well your Seq2Seq model performs. Although ML.NET provides several metrics for classification, such as accuracy, you might want to implement custom metrics like BLEU or ROUGE for tasks like translation or summarization.

Simple Evaluation

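// Note: dataView is the training data here, so these scores are optimistic; evaluate on held-out data when available

var predictions = model.Transform(dataView);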

var metrics = context.MulticlassClassification.Evaluate(predictions, "Label");

Console.WriteLine($"Macro Accuracy: {metrics.MacroAccuracy}");

Console.WriteLine($"Micro Accuracy: {metrics.MicroAccuracy}");
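Accuracy is more informative when it is measured on examples the model did not see during training. ML.NET provides `context.Data.TrainTestSplit` for splitting an `IDataView`; the sketch below shows the same idea in plain C# on an in-memory list. The `DataSplit` class, the default 20% test fraction, and the seed are illustrative choices, not values from this article.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class DataSplit
{
    // Shuffles a copy of the items with a fixed seed, then holds out the last
    // `testFraction` of them as a test set. The input list is not modified.
    public static (List<T> Train, List<T> Test) TrainTestSplit<T>(
        IReadOnlyList<T> items, double testFraction = 0.2, int seed = 42)
    {
        if (testFraction < 0 || testFraction > 1)
            throw new ArgumentOutOfRangeException(nameof(testFraction));

        var shuffled = items.ToList();
        var random = new Random(seed);
        // Fisher-Yates shuffle
        for (var i = shuffled.Count - 1; i > 0; i--)
        {
            var j = random.Next(i + 1);
            (shuffled[i], shuffled[j]) = (shuffled[j], shuffled[i]);
        }

        var testCount = (int)Math.Round(shuffled.Count * testFraction);
        var trainCount = shuffled.Count - testCount;
        return (shuffled.Take(trainCount).ToList(), shuffled.Skip(trainCount).ToList());
    }
}
```

The model would then be fitted on the training portion only and evaluated on the test portion.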

Advanced Evaluation (Custom Metrics)

For tasks like machine translation, it’s common to use BLEU (Bilingual Evaluation Understudy) scores, which measure the quality of the translation by comparing it to one or more reference translations.

Although ML.NET does not natively support BLEU scores, you can implement a custom function in C#:

public double CalculateBLEU(string[] reference, string[] hypothesis)

{

// Implement BLEU score calculation

// Compare hypothesis against reference and return a score between 0 and 1

return 0.0; // Placeholder for actual implementation

}

You would then iterate through your test data, generating predictions and calculating the BLEU score for each prediction.
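As one way to fill in the placeholder above, here is a minimal sentence-level BLEU sketch: clipped n-gram precisions combined by a geometric mean and multiplied by a brevity penalty. It assumes a single reference and applies no smoothing, so the score is 0 whenever any n-gram order has no match. The `Bleu` class and the `maxN` parameter are names chosen for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class Bleu
{
    // Counts every n-gram of length n in the token sequence.
    private static Dictionary<string, int> CountNgrams(string[] tokens, int n)
    {
        var counts = new Dictionary<string, int>();
        for (var i = 0; i + n <= tokens.Length; i++)
        {
            var gram = string.Join("\u0001", tokens, i, n);
            counts[gram] = counts.TryGetValue(gram, out var c) ? c + 1 : 1;
        }
        return counts;
    }

    // Returns a score between 0 and 1 for one hypothesis against one reference.
    public static double Score(string[] reference, string[] hypothesis, int maxN = 4)
    {
        if (hypothesis.Length == 0 || reference.Length == 0) return 0.0;

        var logPrecisionSum = 0.0;
        for (var n = 1; n <= maxN; n++)
        {
            var hypCounts = CountNgrams(hypothesis, n);
            var refCounts = CountNgrams(reference, n);
            var total = hypCounts.Values.Sum();
            // Clip each hypothesis n-gram count by its count in the reference.
            var matched = hypCounts.Sum(kv =>
                Math.Min(kv.Value, refCounts.TryGetValue(kv.Key, out var r) ? r : 0));
            if (total == 0 || matched == 0) return 0.0; // unsmoothed: any zero precision gives 0
            logPrecisionSum += Math.Log((double)matched / total);
        }

        // Penalize hypotheses that are shorter than the reference.
        var brevityPenalty = hypothesis.Length > reference.Length
            ? 1.0
            : Math.Exp(1.0 - (double)reference.Length / hypothesis.Length);
        return brevityPenalty * Math.Exp(logPrecisionSum / maxN);
    }
}
```

With very short sentences such as the three-word examples in this article, a smaller `maxN` (for example 2) avoids scores of 0 caused by missing 4-grams. Corpus-level BLEU pools n-gram counts across all sentences, which is not the same as averaging these per-sentence scores.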

Conclusion

Building and training a Seq2Seq-style model with C# and ML.NET is a practical way to explore sequence-based tasks such as language translation and text summarization within the .NET ecosystem. Because ML.NET’s built-in support for deep learning architectures like RNNs and Transformers is limited, the approach demonstrated in this article approximates sequence modeling with existing ML.NET transforms and a multiclass classifier.

For more advanced Seq2Seq tasks requiring attention mechanisms or more sophisticated architectures, consider training the model in a framework such as TensorFlow or PyTorch and consuming it from ML.NET, for example as a TensorFlow or ONNX model. For small, closed-vocabulary problems, the methods outlined here can serve as a lightweight baseline.

By following these steps, you can build, train, save, and evaluate a basic sequence model entirely in .NET and use it as a starting point for more capable architectures.